25G Ethernet: A New Trend for Future Networks

As bandwidth demand in Cloud data centers rises sharply, the networking and Ethernet industry is moving in a new direction: 25G Ethernet. When comparing the upgrade paths 10GbE-25GbE-100GbE and 10GbE-40GbE-100GbE, end users appear to prefer the 25GbE path. Why choose 25GbE, and what are its benefits? This tutorial explains 25G Ethernet from several angles.

The Emergence of 25G Ethernet

Network engineers were once shocked at the idea of a 10GbE link. Then virtualization and cloud computing created new networking challenges that demanded more bandwidth. Top of Rack (ToR) switches, which typically account for the largest number of connections in a data center, are rapidly outgrowing 10GbE. The IEEE ratified 40GbE and 100GbE standards to keep up with demand, but 40GbE is not cost-effective or power-efficient for ToR switching at Cloud providers, and 100GbE is relatively difficult and costly to deploy. Against this backdrop, the IEEE P802.3by Task Force developed the 25G Ethernet standard for Ethernet connectivity in Cloud and enterprise data centers. The 25GbE specification uses a single 25 Gbps lane and builds on the IEEE 100GbE standard (802.3bj), which achieves 100GbE with four 25 Gbps lanes.

25G Ethernet Optics & Cables

The 25GbE physical interface specification supports two main form factors: SFP28 (1x25 Gbps) and QSFP28 (4x25 Gbps). The most commonly used 25GbE transceivers are SFP28 modules.

The 25GbE PMDs (Physical Medium Dependent sublayers) specify low-cost twinaxial copper cables that need only two twinaxial pairs (one per direction) for 25 Gbps operation. Copper twinax links can connect servers to ToR switches and serve as intra-rack connections between switches and/or routers. Fan-out (breakout) cables, which connect a higher-speed port to multiple lower-speed links, support 10/25/40/50 Gbps speeds and are now available on MMF (multimode fiber), SMF (single-mode fiber), and copper, so the reach can be matched to each application. The most commonly used 25GbE cables are DACs (direct attach copper cables) and AOCs (active optical cables).

| Physical Layer | Name | Reach | Error Correction |
|---|---|---|---|
| Electrical Backplane | 25GBASE-KR | 1 m | BASE-R FEC or RS-FEC |
| Electrical Backplane | 25GBASE-KR-S | 1 m | BASE-R FEC or disabled |
| Direct Attach Copper | 25GBASE-CR-S | 3 m | BASE-R FEC or disabled |
| Direct Attach Copper | 25GBASE-CR | 5 m | BASE-R FEC or RS-FEC |
| Twisted Pair | 25GBASE-T | 30 m | N/A |
| MMF Optics | 25GBASE-SR | 70 m OM3 / 100 m OM4 | RS-FEC |

Table 1: Specification of 25GbE Interfaces.

Why Choose 25G Ethernet?

While 10GbE is adequate for many existing deployments, it cannot deliver the needed bandwidth efficiently: scaling it up requires additional devices, which significantly increases costs. Meanwhile, 40GbE is not cost-effective or power-efficient for ToR switching at Cloud providers. 25GbE was designed to resolve this dilemma.

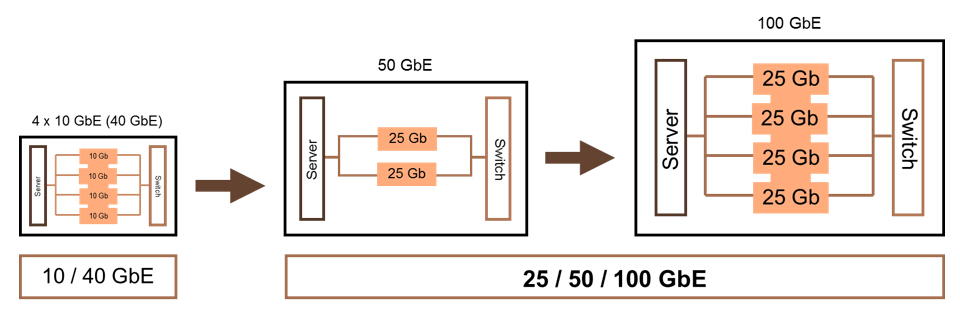

Number of SerDes Lanes

SerDes (Serializer/Deserializer) is an integrated circuit or transceiver block used in high-speed communications to convert parallel data to serial data and back. The transmitter section is a parallel-to-serial converter, and the receiver section is a serial-to-parallel converter. Currently, the mainstream SerDes lane rate is 25 Gbps, so a single 25 Gbps SerDes lane is enough to connect one 25GbE card to another. In contrast, 40GbE needs four 10 Gbps SerDes lanes, so a link between two 40GbE cards over parallel optics requires as many as four pairs of fibers. 25G Ethernet also provides a straightforward upgrade path to 50G (2x25 Gbps) and 100G (4x25 Gbps) networks.

Figure 1: Number of Lanes Needed for Different Ethernet Speeds.
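The lane arithmetic above can be sketched in a few lines. This is a minimal illustration, assuming the lane rates listed in the comments (one 10 Gbps lane for 10GbE, one 25 Gbps lane for 25GbE, four 10 Gbps lanes for 40GbE, four 25 Gbps lanes for 100GbE) and parallel multimode optics that dedicate one fiber pair to each lane. The names `LANE_RATE_GBPS`, `lanes_needed`, and `fibers_needed` are hypothetical, not from any library.

```python
import math

# Assumed SerDes lane rate (Gbps) used by each Ethernet speed.
# 40GbE runs four lanes at only 10 Gbps; 100GbE runs four lanes at 25 Gbps.
LANE_RATE_GBPS = {10: 10, 25: 25, 40: 10, 100: 25}


def lanes_needed(speed_gbps: int) -> int:
    """Number of electrical lanes needed for one port at this speed."""
    return math.ceil(speed_gbps / LANE_RATE_GBPS[speed_gbps])


def fibers_needed(speed_gbps: int) -> int:
    """Fibers for a parallel-optic multimode link, assuming one transmit
    and one receive fiber per lane (e.g. 40GBASE-SR4 uses 8 fibers)."""
    return 2 * lanes_needed(speed_gbps)


for speed in (10, 25, 40, 100):
    print(f"{speed}GbE: {lanes_needed(speed)} lane(s), {fibers_needed(speed)} fibers")
```

The fiber count applies to parallel optics such as 40GBASE-SR4; single-mode 40GBASE-LR4 instead multiplexes its four lanes as wavelengths onto one duplex fiber pair.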

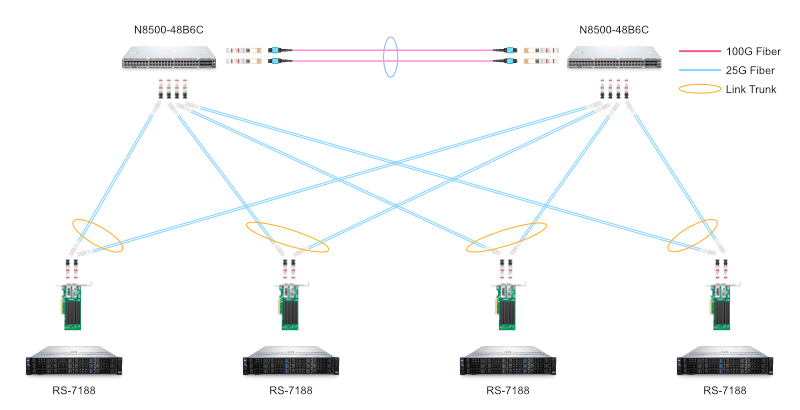

More Efficient 25GbE NIC for PCIe Lanes

At present, mainstream Intel Xeon CPUs provide only 40 lanes of PCIe (PCI Express) 3.0, each with a bandwidth of about 8 Gbps. These PCIe lanes connect the CPU not only to network interface cards (NICs) but also to RAID (Redundant Array of Inexpensive Disks) cards, GPU (graphics processing unit) cards, and all other peripheral cards, so NICs should use their share of the limited lanes efficiently. A dual-port 40GbE NIC carries up to 80 Gbps, more than the roughly 64 Gbps of a PCIe 3.0 x8 link, so it needs an x16 link; even with both ports running at full speed, lane bandwidth utilization is only 40G*2 / (8G*16) = 62.5%. A dual-port 25GbE NIC, by contrast, fits in a PCIe 3.0 x8 link, giving a utilization of 25G*2 / (8G*8) ≈ 78%. 25GbE is therefore significantly more efficient and flexible than 40GbE in its use of PCIe lanes.

Figure 2: 25G NIC Deployment.
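The utilization figures above can be reproduced with a short calculation. This sketch assumes the text's round figure of 8 Gbps per PCIe 3.0 lane (8 GT/s with 128b/130b encoding actually yields about 7.9 Gbps), assumes the NIC is given the smallest standard link width that covers its total port bandwidth, and compares a single direction of traffic, since both Ethernet and PCIe are full duplex. `min_link_width` and `lane_utilization` are hypothetical helper names.

```python
# Assumption: ~8 Gbps of usable bandwidth per PCIe 3.0 lane, as in the text.
PCIE3_LANE_GBPS = 8.0
# Standard PCIe link widths.
LINK_WIDTHS = (1, 2, 4, 8, 16)


def min_link_width(nic_gbps: float, lane_gbps: float = PCIE3_LANE_GBPS) -> int:
    """Smallest standard PCIe link width whose bandwidth covers the NIC."""
    for width in LINK_WIDTHS:
        if width * lane_gbps >= nic_gbps:
            return width
    raise ValueError("NIC bandwidth exceeds a x16 link")


def lane_utilization(ports: int, port_gbps: float,
                     lane_gbps: float = PCIE3_LANE_GBPS) -> float:
    """Fraction of the allocated PCIe bandwidth used with all ports at line rate."""
    nic_gbps = ports * port_gbps
    width = min_link_width(nic_gbps, lane_gbps)
    return nic_gbps / (width * lane_gbps)


for ports, speed in ((2, 40), (2, 25)):
    width = min_link_width(ports * speed)
    print(f"{ports}x{speed}GbE: x{width}, {lane_utilization(ports, speed):.1%}")
```

Run as written, this prints `2x40GbE: x16, 62.5%` and `2x25GbE: x8, 78.1%`. Passing `lane_gbps=8 * 128 / 130` gives slightly higher utilization for both NICs without changing the link widths chosen.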

Lower Cost of 25GbE Wiring

40GbE cards and switches use QSFP+ modules, and short-reach multimode 40GbE links (such as 40GBASE-SR4) use relatively costly MTP/MPO cabling that is not compatible with the duplex LC fiber used for 10GbE. Upgrading from 10GbE to 40GbE therefore means abandoning and replacing most of the existing fiber cabling, which can be a huge expense. By contrast, 25GbE cards and switches use SFP28 transceivers, which, as single-lane devices, work with the same duplex LC fiber as 10GbE. Upgrading from 10GbE to 25GbE avoids rewiring, saving both time and money.

Distinct Benefits of 25GbE for Switch I/O

First, 25G Ethernet maximizes switch I/O (input/output) performance and fabric capacity: web-scale and Cloud organizations get 2.5 times the per-lane bandwidth of 10GbE. Because each 25GbE port uses a single lane, it also offers greater switch port density and network scalability. Second, compared with 40GbE, 25GbE can reduce capital expenditures (CAPEX) and operating expenses (OPEX) by significantly cutting the number of switches and cables required, along with facility costs for space, power, and cooling. Third, the single-lane 25 Gbps Ethernet link protocol leverages the existing IEEE 100GbE standard, which is implemented as four 25 Gbps lanes running over four fiber or copper pairs.
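To make the switch I/O argument concrete, the sketch below counts ports and aggregate bandwidth for a hypothetical switch ASIC with 128 SerDes lanes rated at 25 Gbps. `ASIC_LANES`, `PORT_MODES`, and `switch_capacity` are illustrative names, not a vendor API, and the model assumes every lane is configured in the same mode, ignoring breakout and oversubscription.

```python
# Hypothetical switch ASIC: 128 SerDes lanes, each capable of 25 Gbps.
ASIC_LANES = 128

# (lanes consumed per port, port speed in Gbps) for each Ethernet mode.
# 10GbE and 40GbE clock the lanes at only 10 Gbps.
PORT_MODES = {
    "10GbE": (1, 10),
    "25GbE": (1, 25),
    "40GbE": (4, 40),
    "100GbE": (4, 100),
}


def switch_capacity(mode: str, lanes: int = ASIC_LANES) -> tuple[int, int]:
    """Return (port count, aggregate Gbps) when every lane serves `mode` ports."""
    lanes_per_port, port_gbps = PORT_MODES[mode]
    ports = lanes // lanes_per_port
    return ports, ports * port_gbps


for mode in PORT_MODES:
    ports, total = switch_capacity(mode)
    print(f"{mode}: {ports} ports, {total / 1000:.2f} Tbps")
```

Under these assumptions, the same silicon yields 128 ports and 3.2 Tbps in 25GbE mode versus 32 ports and 1.28 Tbps in 40GbE mode, and four 25 Gbps lanes combine into one 100GbE port, which is how 25GbE reuses the 100GbE lane structure.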

Future 25G Ethernet Market Forecast

Over the past few years, 25G Ethernet has gained wide recognition, and 25GbE products have developed significantly and captured a growing market share. 25GbE is expected to reach a broader market in 2020 and to keep thriving in the future. In the long run, 25GbE is predicted to be a future-proof choice for high-speed data center networks, since 25GbE adapters can also run at 10GbE speeds and 25GbE switches offer a more convenient migration path to 100G or even 400G networks, bypassing the 40GbE upgrade. Still, the need to build industry consensus should not be underestimated. At present, 25GbE is mainly used for switch-to-server links; if it is widely adopted for switch-to-switch links as well, 25G Ethernet may go further. In short, the move to 25GbE is accelerating.

Conclusion

Judging by both market research and user attitudes, 25G Ethernet seems to be the preferred option going forward, as it costs less, consumes less power, and provides higher bandwidth. Given these practical benefits, 25G Ethernet is expected to keep expanding in the future.
