Data Center Optical Transceiver Focus: Trends, Challenges & Influences

With the rapid development of optical communication and growing investment in optical devices in recent years, the global optical transceiver market has expanded quickly. It reached USD 6.3 billion in 2018, an increase of 12.5% over 2017, and is expected to reach USD 7.1 billion in 2020. The development of data center modules is closely tied to the optical equipment in the data center and the way that equipment is connected. These two factors determine whether optical transceivers are needed and how many.
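
The figures above imply a 2017 market size and a growth rate through 2020 that the text does not state directly. The following is a back-of-the-envelope check, assuming the 12.5% is simple year-over-year growth and that the 2020 forecast compounds annually from the 2018 figure; the variable names are illustrative.

```python
# Back-of-the-envelope check of the market figures quoted in the text.
# Assumption: 12.5% is simple year-over-year growth from 2017 to 2018.
market_2018 = 6.3    # USD billion (from the text)
growth_2018 = 0.125  # growth over 2017 (from the text)
market_2020 = 7.1    # USD billion, forecast (from the text)

# Implied 2017 market size: the 2018 value divided by (1 + growth).
market_2017 = market_2018 / (1 + growth_2018)

# Implied compound annual growth rate over the two years 2018 -> 2020.
years = 2020 - 2018
cagr_2018_2020 = (market_2020 / market_2018) ** (1 / years) - 1

print(f"Implied 2017 market: USD {market_2017:.1f} billion")
print(f"Implied 2018-2020 CAGR: {cagr_2018_2020:.1%}")
```

Under these assumptions the 2017 market works out to about USD 5.6 billion, and the forecast corresponds to growth of roughly 6% per year, slower than the 12.5% seen in 2018.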

Optical Transceiver Trends in Data Center

As demand for higher rates grows with applications such as cloud computing and 5G, data center equipment in every part of the network must migrate to higher rates accordingly. For example, as the server access speed migration below shows, enterprise server access moved from 1G-10G in 2014 to 10G-25G in 2018, while mega cloud server access moved from 10G-40G to 25/50G-50/100G over the same period.

| Network Location | 2014 | 2016 | 2018 |
|---|---|---|---|
| Mega Cloud server access | 10GE-40GE | 40GE-25/50GE | 25/50GE-50/100GE |
| Tier 2/3 Cloud server access | 1GE-10GE | 10GE-25GE | 25GE-50GE |
| Enterprise server access | 1GE-10GE | 1GE-10GE | 10GE-25GE |

As equipment moves to higher rates, the modules must support matching data rates to maintain overall network performance. Transceiver bandwidth for the data center campus has been upgraded from 40G to 100G and beyond since 2008. These modules are designed for specific applications, such as 40G-LR4 for 10 km transmission and 100G-CWDM4 for 2 km transmission at 100G.

| Upgrade Path | 2008–2014 | 2013–2019 | 2017–2021 | 2019 onward |
|---|---|---|---|---|
| Data Center Campus | 40G-LR4 | 40G-LR4; 100G-CWDM4 | 100G-CWDM4 | 200G-FR4 |
| Intra-Building | 40G-eSR4; 4x10G-SR | 40G-eSR4 | 100G-SR4 | 200G-DR4 |
| Intra-Rack | CAT6 | 10G AOC | 25G AOC | 100G AOC |
| Server Data Rate | 1G | 10G | 25G | 100G |

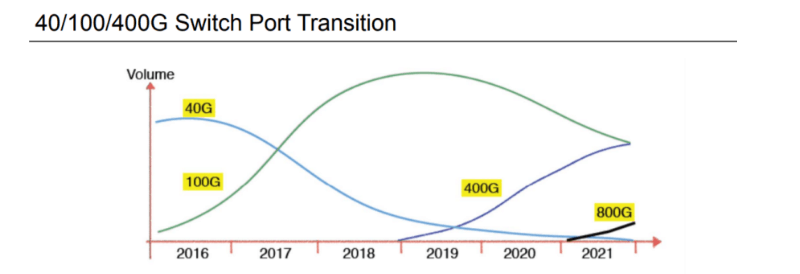

Over time, data center modules with higher rates such as 400G and 800G will become more common. The following figure shows the switch port transition from 2016 to 2021. 100G is clearly mainstream from 2017 to 2019; 400G grows from 2019 onward, followed by 800G starting in 2021. Over this period, 200G is short-lived compared with 400G, so it is not included in the figure.

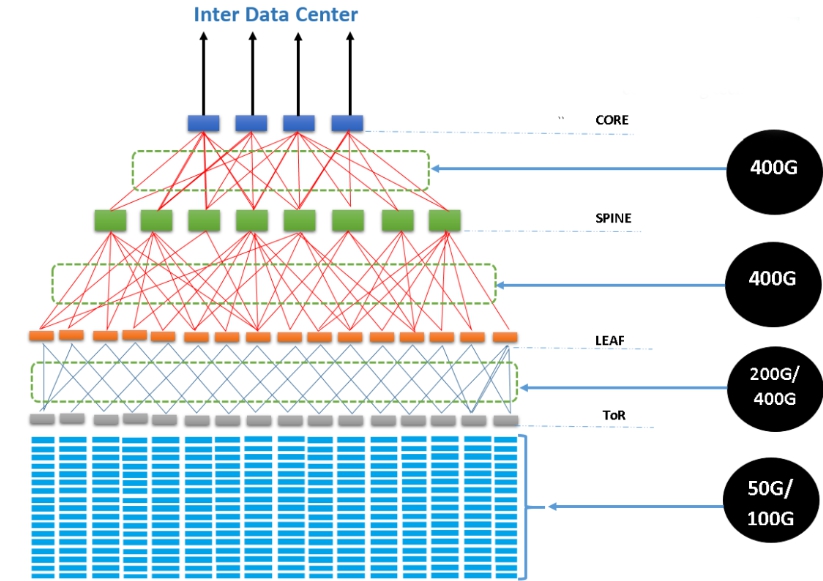

Furthermore, data centers are increasingly adopting spine-leaf architectures in place of the traditional three-tier architecture. The spine-leaf design maximizes connectivity between servers, which pushes the data center toward higher-rate interconnects and accelerates the deployment of higher-speed transceivers. 200G and 400G links are expected to be deployed in such a spine-leaf structure.
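
To make the connectivity argument concrete, the sketch below models a two-tier fabric in which every leaf connects to every spine, then counts the leaf-to-spine links and the modules needed to terminate them. The `LeafSpineFabric` class, the port counts and the speeds are all hypothetical values chosen for illustration, and the sketch assumes one optical module at each end of every fabric link.

```python
from dataclasses import dataclass


@dataclass
class LeafSpineFabric:
    """Hypothetical two-tier fabric: every leaf connects to every spine."""
    leaves: int
    spines: int
    server_ports_per_leaf: int
    server_port_gbps: int
    uplink_gbps: int  # speed of each leaf-to-spine link

    def fabric_links(self) -> int:
        # Full mesh between the two tiers: one link per (leaf, spine) pair.
        return self.leaves * self.spines

    def fabric_transceivers(self) -> int:
        # Assumption: each link is terminated by one module at each end.
        return 2 * self.fabric_links()

    def oversubscription(self) -> float:
        # Server-facing capacity divided by uplink capacity on one leaf.
        down = self.server_ports_per_leaf * self.server_port_gbps
        up = self.spines * self.uplink_gbps
        return down / up


# Illustrative numbers only, not taken from the article.
fabric = LeafSpineFabric(leaves=16, spines=4, server_ports_per_leaf=48,
                         server_port_gbps=25, uplink_gbps=100)
print("fabric links:", fabric.fabric_links())
print("fabric transceivers:", fabric.fabric_transceivers())
print("oversubscription ratio:", fabric.oversubscription())
```

Because the link count is the product of leaves and spines, adding leaves multiplies the number of modules, and raising the uplink speed is the direct way to lower the oversubscription ratio without adding spines.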

Challenges for Data Center Optical Transceivers

As data centers develop rapidly, designing, manufacturing, using and maintaining transceivers will become more challenging.

Cost Control

Transceiver cost is hard to control. Growth in data center traffic accelerates the need for next-generation networking equipment with higher port density and faster speeds, which in turn drives large-scale deployment of optical modules. However, as port speed increases, the cost per bit rises as well, especially for transceivers, which account for a large part of total hardware cost. For example, a third-party 400G transceiver costs several thousand dollars, and an OEM one costs even more. If network service providers need to deploy 400G networks at scale, the cost will be extremely high.
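
The scale of that cost can be estimated with simple arithmetic. The sketch below is illustrative only: the unit price of USD 3,000 and the count of 1,000 links are hypothetical placeholders rather than quoted figures, and it assumes one module at each end of every link.

```python
def cost_per_gbps(price_usd: float, rate_gbps: float) -> float:
    """Module price normalized by its data rate (USD per Gb/s)."""
    return price_usd / rate_gbps


def deployment_cost(price_usd: float, links: int) -> float:
    """Total module cost, assuming two modules per optical link."""
    return 2 * links * price_usd


# Hypothetical inputs for illustration; real prices vary by vendor and type.
PRICE_400G_USD = 3000.0
LINKS = 1000

print("USD per Gb/s:", cost_per_gbps(PRICE_400G_USD, 400))
print("Module cost for deployment (USD):", deployment_cost(PRICE_400G_USD, LINKS))
```

The same two functions can be applied to prices for other module types to compare them on a per-bit basis once real quotes are available.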

Module Design and Manufacturing

Today, high-rate applications such as 5G are driving up port speeds in data center switches and servers, which in turn increases the ASIC capacity of those switches and servers. The electrical signal rate then has to migrate from 25Gb/s to 50Gb/s, 100Gb/s or higher so that all of that capacity can enter and exit the ASIC. Each time the rate increases, moving the electrical signals across a line card to the front panel of the router or switch becomes much harder, which raises both the speed required of fiber optic modules and the complexity of module design and manufacturing.
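
The link between ASIC capacity and electrical lane rate can be shown with a short calculation. The sketch below assumes a hypothetical switch ASIC capacity of 12.8 Tb/s and counts how many electrical lanes are needed at each per-lane rate mentioned above; `serdes_lanes` is an illustrative helper, not part of any library.

```python
import math


def serdes_lanes(asic_capacity_gbps: float, lane_rate_gbps: float) -> int:
    """Electrical lanes needed to carry the full ASIC capacity."""
    return math.ceil(asic_capacity_gbps / lane_rate_gbps)


# Hypothetical ASIC capacity chosen for illustration (12.8 Tb/s).
ASIC_CAPACITY_GBPS = 12_800

for lane_rate in (25, 50, 100):
    lanes = serdes_lanes(ASIC_CAPACITY_GBPS, lane_rate)
    print(f"{lane_rate} Gb/s per lane -> {lanes} lanes")
```

Under this assumption, 25Gb/s lanes would require 512 lanes, while 100Gb/s lanes bring the count down to 128. Keeping the lane count manageable as capacity grows is what pushes the per-lane rate up, and with it the signal integrity challenge across the line card.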

Interoperability

The devices in a data center normally come from different suppliers. Although there are standard interface requirements for optical transceivers, modules from different vendors carry different module codes, so a transceiver from one manufacturer may not be compatible with equipment from another. Although third-party transceiver vendors have entered the market to address this problem, vendor lock-in and module interoperability remain major issues.

Sustainability

Data center equipment generates a lot of heat while operating. The heat is difficult to dissipate and can raise the indoor temperature sharply, leaving the physical equipment in an unfavorable high-temperature environment that can easily cause machine failure or even downtime. High-density optical modules also generate heat, which adds to the cooling load of the data center. It is therefore particularly important to reduce module power consumption as data rates increase. High-speed, high-density, low-power optical modules will be an important measure for the sustainable development of data centers.

What Influences Will Data Center Transceivers Bring About?

As demand for high-data-rate transceivers grows in the near future, the market share of OEM modules is likely to shrink because of their high cost and the still-immature market, while the share of third-party transceivers is likely to rise. This also raises the bar for network device providers, who will have to offer devices with wide compatibility.
